How people talk and understand each other has always fascinated me. The big question for me is how to develop technology that understands spoken language: how can we make automatic speech recognition more intelligent? Besides what is said, there is also a lot of information in how something is said: aspects of physical, emotional, and mental states resonate in the voice, both consciously and unconsciously. I am particularly interested in the automatic interpretation of this implicit information, with the aim of, for example, enabling conversational agents (such as Siri) to respond more appropriately to children or older adults, or developing apps that offer remote support to people suffering from depression.

After studying Linguistics (specialisation Language and Speech Technology) in Utrecht, I ended up at TNO, where I investigated automatic emotion recognition in speech. I then moved to the University of Twente (Human Media Interaction), where I still work on the automatic analysis of nonverbal aspects of speech communication (e.g. laughing, backchanneling) in human-human and human-machine interaction. Besides doing research, I also teach speech processing, affective computing, and interaction technology.

Expertise

Computer Science

- Robot

- Detection

- Speech Emotion Recognition

- Annotation

Psychology

- Emotion

- Humans

- Behavior

- Conversation

My research mainly focuses on automatically analyzing and interpreting nonverbal aspects of speech communication that say something about how the conversation is going and what someone's physical, socio-emotional, and mental state is. My goal is to make automatic speech recognition more intelligent. Among other things, I have worked on automatic detection of laughter, automatic emotion recognition in speech, and automatic generation of backchannels for artificial agents. Currently, I am supervising a number of PhD students who are researching multimodal emotion expression in older adults and responsible design for child-robot interaction. I am also supervising master's students in their research on technology for vulnerable people (e.g. people with dementia, people with multiple disabilities) and on human-robot interaction.

You can read more about my research at https://www.utwente.nl/en/research/researchers/featured-scientists/truong/index/ and on my personal website, http://khiettruong.space/

Courses academic year 2023/2024

Courses in the current academic year are added as soon as they are finalised in the Osiris system, so the list may not yet be complete for the whole academic year.

- 192166200 - Capita Selecta I-Tech

- 192199508 - Research Topics CS

- 192199968 - Internship CS

- 192199978 - Final Project CS

- 192399979 - Final Project BIT

- 201300058 - Research Topics BIT

- 201300059 - Internship BIT

- 201300086 - Research Topics 2 CS

- 201400171 - Capita Selecta Software Technology

- 201500371 - Capita Selecta BIT

- 201600017 - Final Project Preparation

- 201600073 - Affective Computing

- 201600075 - Speech Processing

- 201600080 - ARP in Affective Computing

- 201600082 - Advanced Speech Processing

- 201800234 - Foundations of Interaction Technology

- 201800236 - I-Tech Project

- 201800524 - Research Topics EIT

- 201900194 - Research Topics I-Tech

- 201900195 - Final Project I-Tech

- 201900200 - Final Project EMSYS

- 201900234 - Internship I-Tech

- 202001434 - Internship EMSYS

- 202001613 - MSc Final Project BIT + CS

- 202001614 - MSc Final Project CS + I-Tech

- 202001616 - Research Topics CS + I-TECH

- 202200251 - Capita Selecta DST

- 202300070 - Final Project EMSYS

Courses academic year 2022/2023

- 192166200 - Capita Selecta I-TECH

- 192199508 - Research Topics CS+IST

- 192199968 - Internship CS

- 192199978 - Final Project CS+IST

- 201300086 - Research Topics 2 CS+IST

- 201300294 - Master Thesis SEC Computer Science

- 201400171 - Capita Selecta Software Technology

- 201600017 - Final Project Preparation

- 201600073 - Affective Computing

- 201600075 - Speech Processing

- 201600080 - ARP in Affective Computing

- 201600082 - Advanced Speech Processing

- 201800234 - Foundations of Interaction Technology

- 201800236 - I-Tech Project

- 201800524 - Research Topics EIT

- 201900194 - Research Topics I-Tech

- 201900195 - Final Project I-Tech

- 201900200 - Final Project EMSYS

- 201900234 - Internship I-Tech

- 202000670 - Bachelor Assignment

- 202001434 - Internship EMSYS

- 202001495 - Supplementary Topics Create 2,5

- 202001613 - MSc Final Project BIT + CS

- 202001614 - MSc Final Project CS + I-Tech

- 202001616 - Research Topics CS + I-TECH

- 202200251 - Capita Selecta DST

- 202200377 - Internship I-Tech / Robotics

- 202200399 - Internship I-Tech / Robotics

Current projects

Advancing technology for multimodal analysis of emotion expression in dementia

Multimodal analysis of emotional expression in spoken memories of older adults, lifestory books, reminiscence therapy

Children and AI: talking trust and responsible spoken search

CHATTERS

Responsible design in child-robot-media interaction, spoken interaction between child and conversational agent

4TU Humans & Technology: Smart Social Systems and Spaces for Living Well

Social signal processing and affective computing in speech

Finished projects

EU-FP7 SQUIRREL (Clearing Clutter Bit by Bit)

Robot that helps children tidy up, social signal processing in child-robot interaction

COMMIT P3 SENSEI

Exercise intensity detection through voice, running app

EU-FP7 SSPNet (Social Signal Processing Network)

Automatic analysis of laughter, backchannel generation, speech synchrony

In the press

https://www.bnnvara.nl/wegaanhetmaken/over

https://www.utwente.nl/en/news/2021/3/961766/ut-in-we-gaan-het-maken

https://www.universiteitvannederland.nl/college/kan-een-robot-de-emoties-in-jouw-stem-herkennen

Address

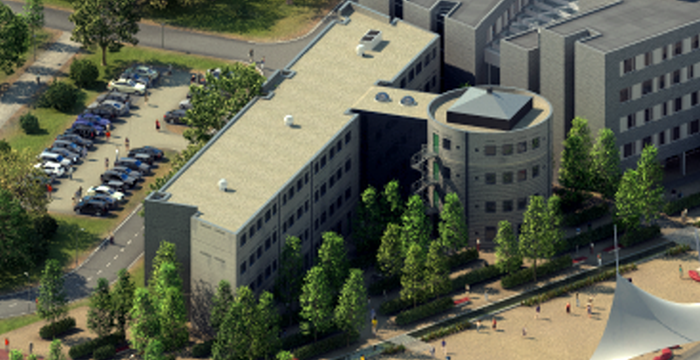

University of Twente

Citadel (building no. 09), room H235

Hallenweg 15

7522 NH Enschede

Netherlands

University of Twente

Citadel H235

P.O. Box 217

7500 AE Enschede

Netherlands